🧚🏻️ YiVal

Website · Producthunt · Documentation

⚡ Build any Generative AI application with evaluation and improvement ⚡

🤔 What is YiVal?

YiVal is a GenAI-Ops framework that lets you iteratively tune your Generative AI model metadata, params, prompts, and retrieval configs all at once, using your preferred test dataset generation, evaluation algorithms, and improvement strategies.

Check out our quickstart guide! →

📣 What's Next?

Expected Features in Sep

- Add ROUGE and BERTScore evaluators

- Add support for MidJourney

- Add support for LLaMA2-70B, LLaMA2-7B, Falcon-40B

- Support LoRA fine-tuning of open-source models

🚀 Features

| | 🔧 Experiment Mode | 🤖 Agent Mode (Auto-prompting) |
|---|---|---|
| Workflow | Define your AI/ML application ➡️ Define test dataset ➡️ Evaluate 🔄 Improve ➡️ Prompt related artifacts built ✅ | Define your AI/ML application ➡️ Auto-prompting ➡️ Prompt related artifacts built ✅ |
| Features | 🌟 Streamlined prompt development process 🌟 Support for multimedia and multimodel 🌟 Support for CSV upload and GPT-4 generated test data 🌟 Dashboard tracking latency, price and evaluator results 🌟 Human (RLHF) and algorithm-based improvers 🌟 Service with detailed web view 🌟 Customizable evaluators and improvers | 🌟 No-code experience for building Gen-AI applications 🌟 Watch your Gen-AI application come to life and improve with just one click |
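The Experiment Mode workflow (define the application, define a test dataset, evaluate, improve) can be pictured as a small loop. The following is a minimal sketch in plain Python. It does not use YiVal's API, and every name in it (`run_app`, `evaluate`, `improve`, `experiment`, the toy dataset) is a hypothetical stand-in chosen for illustration.

```python
# Hypothetical test dataset: (input item, keyword expected in the output).
TEST_DATA = [("tiger", "tiger"), ("panda", "panda")]


def run_app(prompt: str, item: str) -> str:
    """Stand-in for a model call: fills the prompt template if it has a slot."""
    return prompt.format(item=item) if "{item}" in prompt else prompt


def evaluate(prompt: str) -> float:
    """Fraction of test cases whose output mentions the expected keyword."""
    hits = sum(expected in run_app(prompt, item) for item, expected in TEST_DATA)
    return hits / len(TEST_DATA)


def improve(prompt: str) -> list[str]:
    """Stand-in improver: propose simple variations of the current prompt."""
    return [prompt + " about {item}", prompt + " for kids"]


def experiment(seed_prompt: str, rounds: int = 2) -> tuple[str, float]:
    """Evaluate, generate variations, and keep the best-scoring prompt."""
    best, best_score = seed_prompt, evaluate(seed_prompt)
    for _ in range(rounds):
        for candidate in improve(best):
            score = evaluate(candidate)
            if score > best_score:  # only strictly better candidates replace the best
                best, best_score = candidate, score
    return best, best_score


best_prompt, best_score = experiment("Write a story")
print(best_prompt, best_score)
```

In a real setup the stand-ins would be replaced by a model call, a configured evaluator, and an improver; the loop structure is the part this sketch is meant to convey.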

Model Support matrix

We support 100+ LLMs (e.g. gpt-4, gpt-3.5-turbo, llama).

Support by model source is as follows:

| Model | llm-Evaluate | Human-Evaluate | Variation Generate | Custom func |
|---|---|---|---|---|
| OpenAI | ✅ | ✅ | ✅ | ✅ |
| Azure | ✅ | ✅ | ✅ | ✅ |
| TogetherAI | ✅ | ✅ | ✅ | ✅ |
| Cohere | ✅ | ✅ | ✅ | ✅ |
| Huggingface | ✅ | ✅ | ✅ | ✅ |
| Anthropic | ✅ | ✅ | ✅ | ✅ |
| MidJourney | ✅ | ✅ |

To support different models in a custom function (e.g. Model Comparison), follow our example.

To support different models in evaluators and generators, check our config.

Installation

pip install yival

Demo

Colab

| Demo | Supported Features | Colab Link |
|---|---|---|
| 🐯 Craft your AI story with ChatGPT and MidJourney | Multi-modal support of text and images. | |
| 🌟 Evaluate different LLM Model Performance With Your Own Q&A Test Dataset | Easy model evaluation and comparison against 100+ models, thanks to LiteLLM. It provides a benchmark of model performances tailored to your customized use case or test data. | |
| 🔥 Startup Company Headline Generation Bot | Automate prompt evolution | |
| 🧳 Build Your Customized Travel Guide Bot | Automate prompt generation by retrieving the most relevant popular prompts from the community, e.g. awesome-chatgpt-prompts | |
| 📖 Build a Cheaper Translator: Let GPT-4 Teach Llama2 to Create a Cheaper Translator | Use GPT-4-generated test data to fine-tune a Llama2 translation bot with Replicate: a 6% drop in performance for an 18x reduction in cost. | |
| 🤖️ Chat with Your Favorite Characters - 澹台烬 from《长月烬明》 | Give your character a soul with automated prompt generation and character script retrieval | |

Multi-modal Mode

YiVal has multimodal capabilities and handles AI-generated images (AIGC) well.

Find more information in the Animal story demo we provided.

yival run demo/configs/animal_story.yml

Basic Interactive Mode

To get started with a demo for basic interactive mode of YiVal, run the following command:

yival demo --auto_prompts

Once started, navigate to the following address in your web browser:

http://127.0.0.1:8073/interactive

For more details on this demo, check out the Basic Interactive Mode Demo.

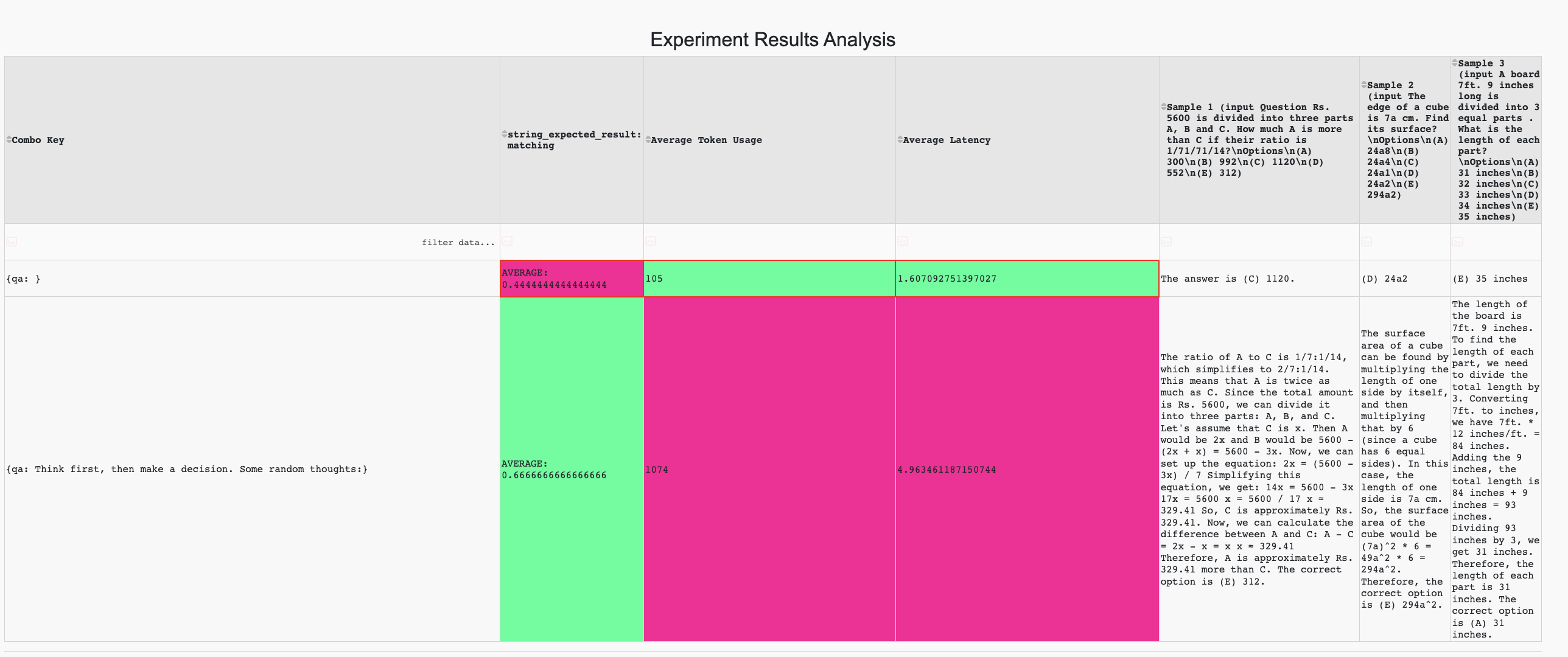

Question Answering with expected result evaluator

yival demo --qa_expected_results

Once started, navigate to the following address in your web browser: http://127.0.0.1:8073/

For more details, check out the Question Answering with expected result evaluator.
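As a rough illustration of what scoring answers against expected results means, here is a minimal sketch in plain Python. It is not YiVal's evaluator: `normalize`, `exact_match_accuracy`, and the sample answers are hypothetical names and values used only for illustration, and exact match is just one simple scoring choice.

```python
import string


def normalize(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    table = str.maketrans("", "", string.punctuation)
    return " ".join(text.lower().translate(table).split())


def exact_match_accuracy(answers: list[str], expected: list[str]) -> float:
    """Share of answers that equal their expected result after normalization."""
    if len(answers) != len(expected):
        raise ValueError("answers and expected must have the same length")
    if not expected:
        return 0.0
    matches = sum(normalize(a) == normalize(e) for a, e in zip(answers, expected))
    return matches / len(expected)


# Hypothetical model outputs and expected results for three questions.
answers = ["Paris.", "  4", "blue whale"]
expected = ["paris", "4", "Elephant"]
accuracy = exact_match_accuracy(answers, expected)
print(f"accuracy = {accuracy:.2f}")
```

Exact match is strict; for free-form answers a similarity-based or LLM-based evaluator is usually a better fit.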

Automatically generate prompts with evaluator

yival demo --basic_interactive

Once started, navigate to the following address in your web browser: http://127.0.0.1:8073/

Contributors

🌟 YiVal welcomes your contributions! 🌟

🥳 Thanks so much to all of our amazing contributors 🥳

Paper / Algorithm Implementation

| Paper | Author | Topics | YiVal Contributor | Data Generator | Variation Generator | Evaluator | Selector | Evolver | Config |
|---|---|---|---|---|---|---|---|---|---|
| Large Language Models Are Human-Level Prompt Engineers | Yongchao Zhou, Andrei Ioan Muresanu, Ziwen Han | YiVal Evolver, Auto-Prompting | @Tao Feng | OpenAIPromptDataGenerator | OpenAIPromptVariationGenerator | OpenAIPromptEvaluator, OpenAIEloEvaluator | AHPSelector | OpenAIPromptBasedCombinationImprover | config |
| BERTScore: Evaluating Text Generation with BERT | Tianyi Zhang, Varsha Kishore, Felix Wu | YiVal Evaluator, bertscore, rouge | @crazycth | - | - | BertScoreEvaluator | - | - | - |
| AlpacaEval | Xuechen Li, Tianyi Zhang, Yann Dubois et al. | YiVal Evaluator | @Tao Feng | - | - | AlpacaEvalEvaluator | - | - | config |
| Chain of Density | Griffin Adams, Alexander R. Fabbri et al. | Prompt Engineering | @Tao Feng | ChainOfDensityGenerator | config |
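One of the evaluators listed above is named OpenAIEloEvaluator. For readers unfamiliar with Elo ratings, the standard update rule for one pairwise comparison can be sketched as follows. This shows the generic Elo formula, not YiVal's implementation; the function names, the K-factor of 32, and the starting rating of 1000 are assumptions made for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_elo(
    rating_a: float, rating_b: float, score_a: float, k: float = 32.0
) -> tuple[float, float]:
    """Return updated ratings; score_a is 1.0 (A wins), 0.5 (draw), or 0.0 (A loses)."""
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


# Two hypothetical prompt variations start with equal ratings; a judge prefers A.
ratings = {"prompt_a": 1000.0, "prompt_b": 1000.0}
ratings["prompt_a"], ratings["prompt_b"] = update_elo(
    ratings["prompt_a"], ratings["prompt_b"], score_a=1.0
)
print(ratings)
```

Repeating this update over many pairwise judgments yields a ranking of prompt variations without needing an absolute score for each one.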